Apple AI Model Reconstructs 3D Objects With Realistic Lighting From a Single Image

Apple researchers have introduced LiTo, an AI model that generates full 3D objects from a single image while maintaining realistic lighting and reflections across different viewing angles.

The model preserves view-dependent lighting effects such as reflections and highlights in the reconstructed object, rather than baking in a single fixed appearance. The work, described in the research paper "LiTo: Surface Light Field Tokenization," aims to improve how machines represent both object geometry and view-dependent appearance in a unified system.

Model Encodes Geometry and Lighting in a Unified 3D Latent Space

The LiTo model introduces a 3D latent representation that captures not only the shape of an object but also how light interacts with its surface from different viewing angles. This approach addresses a limitation in prior methods, which typically focus either on reconstructing geometry or predicting simplified, view-independent appearance.

By encoding surface light field data into a compact set of latent vectors, the system can reproduce view-dependent effects such as specular highlights and Fresnel reflections under complex lighting conditions. This allows the reconstructed object to maintain consistent visual realism as the viewpoint changes.
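To make "view-dependent" concrete, the short Python sketch below evaluates two textbook shading terms, a Blinn-Phong specular lobe and Schlick's approximation of Fresnel reflectance, for a fixed surface point and light while the camera moves. These are standard graphics approximations chosen purely for illustration, not LiTo's method; the function names, the base reflectance of 0.04, and the shininess of 64 are assumptions.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def schlick_fresnel(cos_theta, f0=0.04):
    """Schlick's approximation: reflectance rises toward grazing angles."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5

def blinn_phong_specular(normal, light_dir, view_dir, shininess=64.0):
    """Blinn-Phong highlight: strongest when the half vector aligns with the normal."""
    half = normalize(tuple(l + v for l, v in zip(light_dir, view_dir)))
    return max(dot(normal, half), 0.0) ** shininess

# Illustrative setup (assumed): flat surface facing +z, light 45 degrees off the normal.
normal = (0.0, 0.0, 1.0)
light_dir = normalize((1.0, 0.0, 1.0))

for angle_deg in (0, 45, 80):
    a = math.radians(angle_deg)
    # Camera tilts away from the normal, on the opposite side from the light.
    view_dir = (-math.sin(a), 0.0, math.cos(a))
    spec = blinn_phong_specular(normal, light_dir, view_dir)
    fresnel = schlick_fresnel(dot(normal, view_dir))
    print(f"view {angle_deg:2d} deg: specular={spec:.3f} fresnel={fresnel:.3f}")
```

In this setup the highlight peaks when the camera sits in the mirror direction of the light (45 degrees), and the Fresnel term grows as the view approaches a grazing angle. A single view-independent color per surface point cannot reproduce either behavior.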

System Reconstructs Full 3D Objects From a Single Image

Unlike traditional 3D reconstruction techniques that require multiple images from different angles, LiTo can infer a complete 3D representation from a single input image. The system achieves this through a two-stage process involving an encoder and a decoder.

The encoder compresses the object’s visual information into a latent representation, capturing both its structure and lighting behavior. The decoder then reconstructs the object, generating both its geometry and its appearance across different viewing angles.

A separate model is trained to predict the appropriate latent representation directly from a single image, enabling end-to-end reconstruction without requiring multi-view input data.
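The data flow described above can be sketched with a deliberately simple toy in Python. It only illustrates the interfaces: an encoder that turns any number of surface samples into a fixed-size set of latent vectors, and a decoder that is queried with a surface position and a viewing direction. The real system uses trained neural networks; the averaging encoder, the distance-weighted decoder, the `Sample` type, and `NUM_TOKENS` are all hypothetical stand-ins, and the separate image-to-latent model is not sketched here.

```python
import math
import random
from dataclasses import dataclass

@dataclass
class Sample:
    position: tuple  # (x, y, z) point on the object's surface
    view_dir: tuple  # unit vector from the surface toward the camera
    rgb: tuple       # color observed from that direction

NUM_TOKENS = 4  # hypothetical latent count, not a value from the paper

def encode(samples):
    """Toy encoder: average any number of samples into NUM_TOKENS vectors."""
    if len(samples) < NUM_TOKENS:
        raise ValueError("need at least NUM_TOKENS samples")
    latents = []
    for i in range(NUM_TOKENS):
        group = samples[i::NUM_TOKENS]  # every NUM_TOKENS-th sample
        rows = [s.position + s.view_dir + s.rgb for s in group]
        latents.append(tuple(sum(col) / len(rows) for col in zip(*rows)))
    return latents

def decode(latents, position, view_dir):
    """Toy decoder: blend token colors by closeness to the queried point and view."""
    query = position + view_dir
    weights = [math.exp(-math.dist(query, token[:6])) for token in latents]
    total = sum(weights)
    return tuple(
        sum(w * token[6 + c] for w, token in zip(weights, latents)) / total
        for c in range(3)
    )

# Synthetic stand-in data: random surface points with random colors.
rng = random.Random(0)
samples = [
    Sample(
        position=(rng.random(), rng.random(), rng.random()),
        view_dir=(0.0, 0.0, 1.0),
        rgb=(rng.random(), rng.random(), rng.random()),
    )
    for _ in range(32)
]

latents = encode(samples)
color = decode(latents, (0.5, 0.5, 0.5), (0.0, 0.0, 1.0))
print(len(latents), color)
```

The point of the sketch is the shape of the problem: the latent set stays the same size no matter how many samples go in, and appearance is recovered by querying the decoder with a viewing direction rather than by storing one image per angle.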

Training Uses Subsampled Multi-View and Lighting Data

To train the system, the researchers used thousands of 3D objects, each rendered from 150 different viewing angles under three lighting conditions. Instead of feeding all available data into the model, the training process relied on randomly sampled subsets of surface light field data.

These subsets were encoded into latent representations, while the decoder was trained to reconstruct the full object and its appearance under varying conditions. Over time, the model learned to generalize both geometry and view-dependent lighting from partial information.
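As a rough illustration of the subsampling idea, the Python sketch below enumerates the renderings available for one object and draws a random subset for a training step. It assumes every viewing angle is rendered under every lighting condition, which would give 150 × 3 = 450 renderings per object; the article does not confirm that pairing, and the subset size of 16 and the function names are hypothetical.

```python
import random

NUM_VIEWS = 150     # viewing angles per object, as reported in the article
NUM_LIGHTINGS = 3   # lighting conditions per object, as reported in the article
SUBSET_SIZE = 16    # hypothetical: the article does not give a subset size

def all_renderings():
    """Every (view, lighting) pair, assuming each view is lit all three ways."""
    return [(v, l) for v in range(NUM_VIEWS) for l in range(NUM_LIGHTINGS)]

def sample_training_subset(rng, subset_size=SUBSET_SIZE):
    """Draw distinct renderings at random to feed the encoder for one step."""
    return rng.sample(all_renderings(), subset_size)

rng = random.Random(42)
subset = sample_training_subset(rng)
print(len(all_renderings()), "renderings available,", len(subset), "used this step")
```

Because each step sees a different partial view of the object, the encoder cannot rely on any particular camera or light being present, which is consistent with the article's point that the model learns to generalize from partial information.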

Latent Space Enables Efficient Representation and Reconstruction

The system relies on a latent space, in which visual information is represented as compact numerical vectors. This approach reduces computational cost while preserving essential relationships between object features and lighting behavior.

By operating in this compressed space, the model can efficiently infer how an object should appear under different viewpoints without explicitly storing every possible variation. This enables both faster processing and more consistent rendering results.

Comparisons Show Improved View-Dependent Rendering

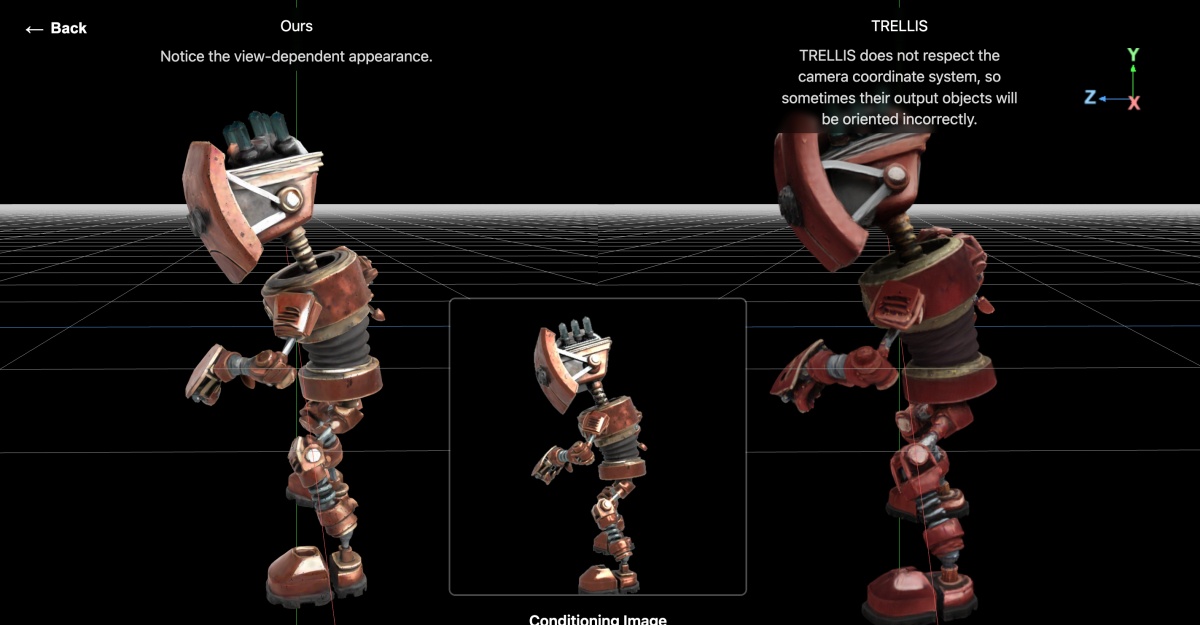

Apple’s research includes comparisons between LiTo and an existing model called TRELLIS. The results demonstrate that LiTo produces more consistent and realistic lighting effects when objects are viewed from different angles, particularly in scenarios involving complex reflections.

The study highlights the model’s ability to jointly represent geometry and appearance, which is critical for applications that require high visual fidelity across dynamic viewpoints.

Written by Ravi Teja KNTS
